My Internship at Arçelik

- Sep 28, 2023

Updated: Aug 30, 2025

Hello!

Today I'm going to share my internship experience in Arçelik's Advanced Robotics lab. It was my first internship; I took part in several different projects and learned a lot. I spent most of my time on a well-known problem in robotics, namely the eye-in-hand problem. I also had the chance to work with Arçelik's machine vision cameras, and I even developed a basic program for them, which (I hope) they will be using. Below I explain all of this in detail.

Everyone in the lab was always eager to help, teach, and, maybe most importantly, share their experiences. I never hesitated to ask a question, and they always tried to answer, either directly or by pointing me to someone or to a resource. My supervisor, Sadettin Esenlik (Electrical and Electronics Engineer | Senior Team Lead), was always there whenever I needed him. He helped me a lot with understanding the basic concepts of robotics and the project I worked on.

Eye-in-hand problem

We see with our eyes and use our hands for many things (for example, typing on a keyboard or moving things). The eye-in-hand problem has a similar meaning in robotics: the eye refers to a camera and the hand refers to the robot's arm. When the two come together, a problem arises. It is a well-known calibration problem in robotics, called the eye-in-hand problem.

What we mean by calibration in this context is being able to represent the same thing (a point) from the view of both the eye and the robot. We need to calibrate the camera with the robot so that we can merge the photos taken by the camera. Just a second, what is a point? Let's stop here and take a look at what a point means in robotics and, more generally, how a basic robot works.
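To make "representing the same point from both views" a bit more concrete, here is a minimal Python sketch. It assumes the calibration result can be written as a 4x4 homogeneous transform from the camera frame to the robot's hand frame, and that the robot reports the pose of its hand in its own base frame. The names (`T_base_hand`, `T_hand_cam`) and all the numbers are made up for illustration; they are not from the project.

```python
import math

def matmul(a, b):
    """Multiply two 4x4 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def transform_point(t, p):
    """Apply a 4x4 homogeneous transform to a 3D point (x, y, z)."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(t[i][k] * v[k] for k in range(4)) for i in range(3))

def rot_z_translate(angle_deg, tx, ty, tz):
    """Build a transform: rotation about the Z axis plus a translation."""
    c = math.cos(math.radians(angle_deg))
    s = math.sin(math.radians(angle_deg))
    return [[c, -s, 0.0, tx],
            [s, c, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]

# Hypothetical values (metres and degrees), for illustration only.
T_base_hand = rot_z_translate(90.0, 0.5, 0.0, 0.3)  # hand pose reported by the robot
T_hand_cam = rot_z_translate(0.0, 0.0, 0.0, 0.1)    # what calibration would estimate

# Chain the transforms: camera frame -> hand frame -> robot base frame.
T_base_cam = matmul(T_base_hand, T_hand_cam)

point_in_camera = (0.2, 0.0, 0.0)  # a point as the camera sees it
point_in_base = transform_point(T_base_cam, point_in_camera)
print([round(c, 3) for c in point_in_base])
```

Once `T_hand_cam` is known, every point the camera measures can be expressed in the robot's coordinates, which is what makes it possible to merge photos taken from different arm positions.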

What is a robot?

A robot is defined by the Oxford dictionary as "a machine that can perform a complicated series of tasks by itself." There are many types of robots, ranging from self-driving cars to surgical robots, from drones to industrial robots. A robot needs to be programmed to function correctly, and to program it correctly a coordinate system is needed, because the robot interacts with the world. For this reason, it is usually said that a robot has a world, and its coordinate system is referred to as the world's coordinate system: it is how the robot perceives and interacts with its world.

In my internship, I worked mainly on industrial robotics, which automates manufacturing by replacing manual tasks previously done by people with robots. Arçelik is one of the best places to work on industrial robotics because its main job is manufacturing its own products, and it has already automated many parts of its factories.

This factory has the potential to produce more than 4,000 washing machines a day. The manufacturing never stops.

Let's continue with robots. I already said that I worked with industrial robots, so let's dive a little deeper into them.

Industrial Robots

In industrial manufacturing there are many different tasks, and they can change according to the product being produced at the time. Although the tasks are diverse, there are some patterns as well, e.g. moving things from one place to another or mounting screws. This is where industrial robotics comes into play, offering automation and reliability.

To achieve this kind of task, an industrial robot needs a certain number of degrees of freedom (DOF), which depends on the task and the environment it will be used in, because this number shows how freely the robot can move in its world. By degrees of freedom we mean the number of axes the robot has, and by axes we mean the parts of the robot that can move independently (though not fully independently) of the other parts.

We can say that the more complex the task, the more DOF the robot needs. We live in a 3-dimensional (3D) world. This means that we can choose a point (a location that has no shape, length, width, or size by definition) and call it the origin. It is then possible to model our world relative to that origin. Below is a basic example of a 3D coordinate system.

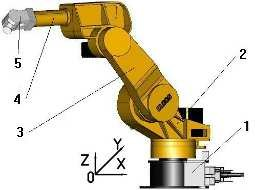

Because our world is 3D and the tasks that robots handle come from the real world, we need to model and program our robot according to a coordinate system. This coordinate system is called the world's coordinate system, and its origin is usually the base of the robot. Below is the base of a robot.

As can be seen from the figure above, this robot has 5 axes. These 5 axes can move independently of each other, subject to some limitations: when one axis reaches a certain position, it can restrict the range of another axis.
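As a rough illustration of axes and their limits, here is a small Python sketch of a hypothetical 5-axis robot. The joint names and limit values are invented, and the sketch only checks each axis against its own fixed range; it does not model the case where the position of one axis changes the range of another.

```python
from dataclasses import dataclass

@dataclass
class Joint:
    """One axis of the robot with its allowed range in degrees."""
    name: str
    min_deg: float
    max_deg: float

    def allows(self, angle_deg: float) -> bool:
        return self.min_deg <= angle_deg <= self.max_deg

# Hypothetical 5-axis robot; the limits are invented for illustration.
ROBOT_JOINTS = [
    Joint("axis_1", -170.0, 170.0),
    Joint("axis_2", -90.0, 110.0),
    Joint("axis_3", -120.0, 120.0),
    Joint("axis_4", -180.0, 180.0),
    Joint("axis_5", -100.0, 100.0),
]

def degrees_of_freedom(joints):
    """In this simple model, the DOF is just the number of axes."""
    return len(joints)

def violated_joints(joints, angles_deg):
    """Return the names of the axes whose requested angle is out of range."""
    if len(angles_deg) != len(joints):
        raise ValueError("one angle is required per axis")
    return [j.name for j, a in zip(joints, angles_deg) if not j.allows(a)]

print(degrees_of_freedom(ROBOT_JOINTS))
print(violated_joints(ROBOT_JOINTS, [0.0, 120.0, 0.0, 0.0, -150.0]))
```

A configuration is a list with one angle per axis, so a 5-axis robot is described by 5 numbers at any moment.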

My project

Now I would like to talk about the project I worked on. It is an ongoing research project at Arçelik that promises a wide range of uses, shorter manufacturing time, and better reliability. Below is the robot we used during the experiments for this project.

Because it is an ongoing research project, I should not share sensitive information about it, but as you can see from the end effector of the robot, it has a camera. The camera is very sensitive. It is based on structured light projection and has a short range, but it can measure anything that falls within that range.

The lab has past, ongoing, and planned projects related to computer vision, so they use machine vision cameras a lot. When I arrived at the lab for my internship, they had just started using a new camera with nearly the same quality at a much lower price.

The problem was that they needed a small piece of software that does just what they need, e.g. transferring the pose from the camera to a local computer or a server. Although the camera comes with its own software, it is so large that they cannot use it in their applications, because it consumes computing and network resources unnecessarily.

So another intern and I designed a basic Python program that does the job according to the given settings, e.g. exposure rate. Finally, we also added some fun code that detects QR codes :). Some photos are below.
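I can't share the actual program, but a minimal sketch of the idea could look like the following. It is not the code we wrote: it assumes the camera can be opened through OpenCV's generic `cv2.VideoCapture` interface (many machine vision cameras need the vendor's own SDK instead), and the settings names (`camera_index`, `exposure`, `output_path`) are hypothetical.

```python
import json
from dataclasses import dataclass

@dataclass
class CaptureSettings:
    """Hypothetical settings; missing keys fall back to these defaults."""
    camera_index: int = 0
    exposure: float = -5.0
    output_path: str = "frame.png"

def load_settings(text: str) -> CaptureSettings:
    """Parse settings from a JSON string."""
    return CaptureSettings(**json.loads(text))

def capture_and_scan(settings: CaptureSettings) -> str:
    """Grab one frame, save it, and return the text of a QR code if one is found."""
    import cv2  # imported here so the settings can be parsed without OpenCV

    cap = cv2.VideoCapture(settings.camera_index)
    try:
        if not cap.isOpened():
            raise RuntimeError("could not open the camera")
        # Whether this property is honoured depends on the camera driver.
        cap.set(cv2.CAP_PROP_EXPOSURE, settings.exposure)
        ok, frame = cap.read()
        if not ok:
            raise RuntimeError("could not read a frame")
    finally:
        cap.release()

    cv2.imwrite(settings.output_path, frame)
    text, _points, _straight = cv2.QRCodeDetector().detectAndDecode(frame)
    return text  # empty string when no QR code is detected
```

With a camera attached, calling `capture_and_scan(load_settings('{"exposure": -6.0}'))` would save one frame to `frame.png` and return the decoded QR text, or an empty string if there is no QR code in view.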

My presentation

Lastly, below is a photo from the presentation I gave to the lab about my project: what I had done, what I had tried, and what I was planning to apply in the coming weeks.

That is the end of the story. Overall, it was an amazing journey for me. I took part in different projects, did research, and learned a lot that I believe I will use throughout my career. Thank you, Arçelik, for providing this opportunity!

Stay well!
